Nudification: drawing on evidence from Childline cases and online monitoring, Chris Sherwood argues for stronger legal duties on AI developers and platforms to prevent the generation of child abuse material.

At the NSPCC, we are deeply concerned about the dangers children are facing from artificial intelligence.

While AI presents wonderful opportunities, it also poses significant safety risks to young people.

It’s a powerful tool that can easily be misused. Generative AI has made it terrifyingly easy for offenders to create abuse material at scale.

We’re no longer talking about hypothetical dangers or future threats. The harm is happening right now, in real time, to real children, and the systems meant to protect them are nowhere near strong enough.

Nudification Harm is Already Happening

Reports of AI-generated child sexual abuse material are growing at an alarming rate. The Internet Watch Foundation recorded a fourfold increase in this material in their annual report published last year.

Perpetrators are using image generators and nudification apps to create hyper-realistic child sexual abuse material, which can be used to abuse and blackmail young people.

Publicly available open-source AI models, such as those released by Stability AI and Black Forest Labs, have been exploited by perpetrators to create child sexual abuse material.

It’s likely we only know a fraction of these cases, as offenders can edit and manipulate open-source models out of sight of the platforms and law enforcement.

This is simply not good enough, and we can’t allow it to continue.

It is inexcusable that, according to recent reports, X’s Grok has been misused to create child sexual abuse material and to generate semi-naked and naked images of adults and children. These reports show that this illegal content can be generated on popular social media sites and then used to harm children.

Devastatingly, through Childline, we are hearing from young people who experience abuse caused by the misuse of generative AI.

One 16-year-old boy who contacted the service told us that a girl claiming to be his age made fake sexual images of him and threatened to share them with his friends unless he sent her £200.

We are also hearing firsthand from young people about the devastating impact on their safety, mental health, and wellbeing when nude images of them are created and shared.

Each contact we receive illustrates to me that much more needs to be done to address the harms children tell us they are experiencing because of the misuse of AI.

Where the Law Falls Short

Currently, there is a patchwork of different legislation that protects children against some AI risks. This includes the Online Safety Act, which goes some way to mitigating the dangers by requiring many AI companies to conduct risk assessments and remove AI-generated child sexual abuse material when detected.

However, many AI chatbots are not in scope of the Act. Grok was alarmingly easy to exploit, putting children at risk of having illegal material generated of them, which could be used to bully, extort or torment.

It’s clear that the Online Safety Act does not require services to robustly test their training data, before models are rolled out, to ensure that child sexual abuse material cannot be generated.

The Crime and Policing Bill, currently progressing through Parliament, has a number of new measures to tackle AI-generated child sexual abuse material, which we welcome. These include criminalising image generators that have been designed to create this illegal content and banning nudification apps.

Criminalising these functions is a positive step. However, I believe that a more preventative approach is needed to ensure this content is not created in the first place.

Without stronger, comprehensive safeguards, we leave loopholes that offenders can exploit, putting children at serious risk.

A Preventative Duty of Care for AI

The NSPCC is calling for the creation of a Statutory Duty of Care for Children’s Safety, ensuring that there are comprehensive protections in place for children across all AI products and services.

As part of this Duty of Care, AI developers would be required to robustly test their models to ensure that child sexual abuse material cannot be generated on their service.

This would mean ensuring no images of children are included in datasets and requiring platforms to work with trusted partners to safely test models using sets of known child sexual abuse material.

The UK’s world-leading AI Security Institute, which already conducts tests on some of the most-used AI platforms in the world, should also support the effort to protect children.

We believe their role should be expanded to help prevent the creation of child sexual abuse material.

This Duty of Care would also ensure that children are always protected when they interact with AI-generated content, such as being able to report fake nude images of themselves.

I also think that practical guidance for parents and education in schools should be provided to give everyone a better understanding of the risks of this new technology.

These requirements and new support would help ensure that services are held fully accountable for protecting children from this horrific type of online abuse and stopping illegal activity at source.

We must act now. Unless technology companies are compelled to use every tool available to combat this sinister and illegal abuse, children will continue to pay the price.

The digital world is a fundamental part of young people’s lives; they must be able to enjoy its benefits whilst staying safe.

Image Credit: Shutterstock

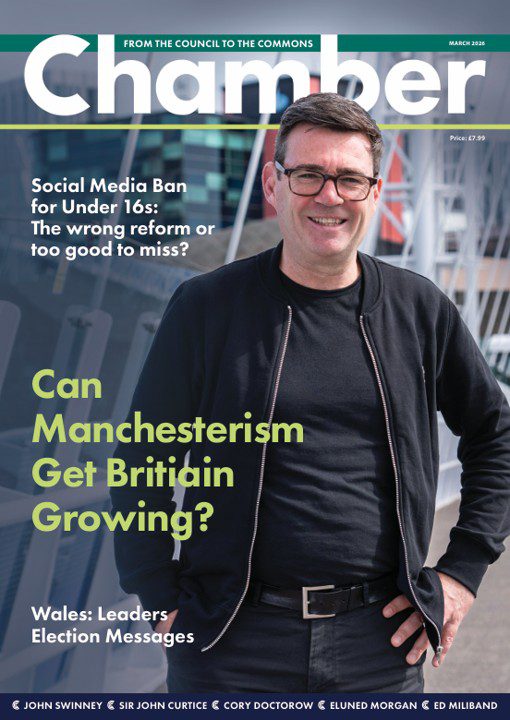

This article features in the new edition of ChamberUK, our parliamentary journal.